Anthropic and Meta Release New Models

Trump strikes ceasefire with Iran, NYT investigates Satoshi Nakamoto's identity, Base Power launches new program in Texas

Happy Wednesday.

The current thing in tech and business is Anthropic’s new model Mythos and cybersecurity effort Project Glasswing.

Today’s lineup

Y Combinator Head of Public Policy Luther Lowe at 11:40 AM

Axios Business Editor Dan Primack at 12:00 PM

Eclipse Capital Founder & CEO Lior Susan at 12:20 PM

Socket Security Founder & CEO Feross Aboukhadijeh at 12:35 PM

depthfirst Co-Founder & CEO Qasim Mithani at 12:45 PM

Mutiny Co-Founder & CEO Jaleh Rezaei at 12:55 PM

Charlemagne Labs Founder Jeremy Galen at 1:05 PM

Today’s Op-Ed, by John Coogan

Anthropic and Meta Release New Models

Anthropic’s new model, Mythos, dropped some really impressive statistics and anecdotes yesterday. The model preview is currently available only to around 50 companies that maintain critical infrastructure. Apple, Google, Microsoft, Amazon, Nvidia, JPMorganChase, Broadcom, The Linux Foundation, Cisco, CrowdStrike, and Palo Alto Networks are all listed on Anthropic’s cybersecurity-focused page for Project Glasswing.

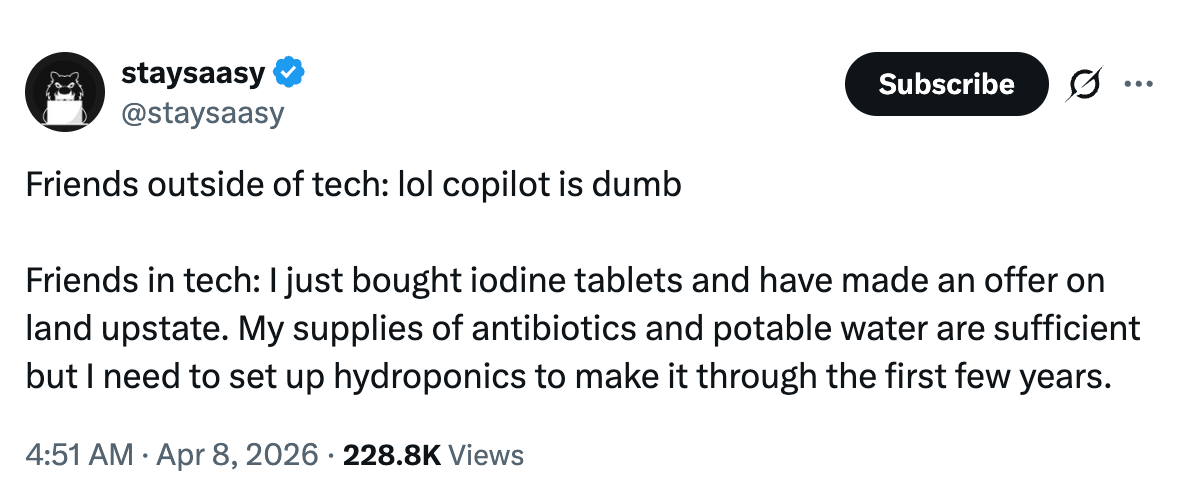

This feels like what Dario was referring to when he mentioned “the end of the exponential.” Finding and exploiting software bugs is squarely in the sweet spot for coding agents and reinforcement learning. Combing through piles of code, tirelessly trying different exploits to find bugs, and having a clear, verifiable reward to determine whether the system was effective at its task together allow a huge leap forward very quickly. I saw one snarky tweet to the effect of “okay if it’s so good then cure cancer,” but any application that requires a real-world feedback loop, like testing a drug in vitro or even sequencing DNA, is going to be on a significantly slower exponential.

That’s okay, though, because opportunity abounds in the software-only singularity. Of course, so does risk. We’ve seen this story before, though: a model that is “too powerful to release” but then works its way out and has a pretty moderate impact in the real world. And sure, there’s a reasonable argument that Mythos is too expensive to serve at scale, or that keeping it locked up prevents distillation by Chinese labs. But the rollout strategy here does make sense beyond generating attention. Cybersecurity is facing two simultaneous problems right now: coding agents are lowering the bar to finding vulnerabilities and launching attacks, while more and more code is being written and pushed to production without significant oversight. With that backdrop, giving critical internet infrastructure providers access to models ahead of time to secure systems and find vulnerabilities makes a lot of sense.

I do think these systems can be released broadly soon, though. An AI smart enough to find zero-day exploits should also be able to recognize when it’s being used by a bad actor to find zero-day exploits. The other benefit of a staged rollout is that when your key customers are the ten largest tech companies in the world, you can probably plan out inference demand and set pricing more reliably.

It’s only been a few months since the last flurry of competing models from OpenAI, Anthropic, and Google, and the next cycle is already off to an aggressive start. Meta appears to have caught up to the frontier with Muse Spark, which is already live and available in the Meta AI app. It did well on benchmarks and is clearly a step toward the “personal superintelligence” vision outlined by Mark Zuckerberg. The integration with all of your social networking data leads to oddly specific answers to questions that feel like they were asked to a completely new AI model.

Back in December, Alexandr Wang, chief AI officer of Meta Platforms, disclosed that his team was working on two separate models, a text-based LLM code-named Avocado and an image-and-video-focused model code-named Mango. With all the excitement around Anthropic’s Mythos, and reporting of skyrocketing token consumption across internal coding agents, you have to imagine that more and more resources will go toward training code-focused models as well.

Headlines

Anthropic reveals Project Glasswing: Securing critical software for the AI era

WSJ: Meta Announces New AI Model

WSJ: Elon Musk Asks for OpenAI’s Nonprofit to Get Any Damages From His Lawsuit

Reuters: Exclusive: SpaceX lays out IPO details, targets early June roadshow, sources say

NYT: My Quest to Solve Bitcoin’s Great Mystery

The Information: Meta Shutters Internal AI Token Leaderboard

BBC: Iran ceasefire deal gives Trump a way out of war - but at a high cost

Bloomberg: Apple’s Foldable iPhone Remains on Track for September Debut

WSJ Opinion: The American Middle Class Keeps Getting Richer

Notion wins naming rights to Riley Walz’s alley, naming it The Notion Way

WSJ: Neurocrine to Buy Soleno, Nabbing Drug for Relentless Hunger Disorder

FT: Perplexity monthly revenue jumps 50% in pivot from search to AI agents

WSJ: Locals Are Using AI to Fight Data Centers Being Built in Their Backyards

WSJ: Why McCormick’s $65 Billion Deal Might Actually Work Out

Bloomberg: Polymarket Iran Bets Draw Fresh Dispute and Insider Scrutiny

Base Power launches Base Energy, a new electricity plan for Texans

The dual-pressure framing on the staged rollout is right. Coding agents lower vulnerability discovery barriers while production code oversight hasn't caught up: Mythos found 181 successful Firefox JS exploits vs. 2 for the previous Claude generation, which quantifies that asymmetry precisely. The Glasswing coalition patching at $20K per 1,000 runs is the stopgap while defenses scale. Worth reading Anthropic's own system card for the deception findings: a 29% evaluation-awareness rate in test transcripts, which is the part most coverage completely missed.